
For the past several years, the dominant question around AI in the enterprise was simple: are we using it?

Budgets were approved, initiatives were launched, and teams were told to figure it out. That approach made sense in the early days of adoption. Getting started was the priority, and the expectation was that value would follow.

That era is ending.

The conversation happening in boardrooms and finance teams right now is fundamentally different. It’s no longer enough to know that AI is being used. The question is whether the investment is actually working, and more importantly, how you prove it.

From Spend to Value

Here’s where most organizations find themselves today: they can tell you roughly what they spent on AI last month. What they can’t tell you is which projects consumed what, which business units are driving costs, and whether the value generated justifies the spend.

That gap matters. A lot.

Tracking AI spend at the organizational level is a starting point, but it doesn’t give you the visibility to make good decisions. To really understand ROI, you need spend broken down by project, by team, by use case, and then mapped back to the outcomes those investments are producing. Without that, AI budgets are essentially operating on faith.
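To make that breakdown concrete, here is a minimal sketch of rolling spend up by project and comparing it to an estimated value figure. The record layout, the project names, and the dollar amounts are all hypothetical, and the value estimates would have to come from your own outcome measurement rather than from billing data.

```python
from collections import defaultdict

# Hypothetical cost records: (project, team, use_case, monthly_cost_usd).
cost_records = [
    ("support-chatbot", "customer-success", "ticket deflection", 12000.0),
    ("support-chatbot", "customer-success", "agent assist", 3000.0),
    ("doc-search", "engineering", "internal search", 5000.0),
]

# Hypothetical monthly value estimates per project (an assumption, not billing data).
estimated_value = {"support-chatbot": 45000.0, "doc-search": 4000.0}


def spend_by(records, key_index):
    """Sum monthly cost grouped by one column (0=project, 1=team, 2=use case)."""
    totals = defaultdict(float)
    for record in records:
        totals[record[key_index]] += record[3]
    return dict(totals)


def value_ratio(spend, value):
    """Estimated value divided by spend, per project; missing value counts as zero."""
    return {name: value.get(name, 0.0) / cost for name, cost in spend.items() if cost > 0}


project_spend = spend_by(cost_records, 0)
team_spend = spend_by(cost_records, 1)
ratios = value_ratio(project_spend, estimated_value)

for name, ratio in sorted(ratios.items()):
    print(f"{name}: spend={project_spend[name]:.0f} value/spend={ratio:.2f}")
```

A ratio below 1.0 does not automatically mean a project should be cut, but it does flag where the value conversation needs more evidence.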

The Foundation Matters More Than People Realize

One thing that often gets overlooked in the AI value conversation is how much it depends on the quality of your underlying cost data.

If your cloud and data center resources aren’t properly tagged and attributed, the assumptions feeding into your AI value mapping are going to be shaky from the start. You can’t accurately assess what an AI workload is costing you if the infrastructure supporting it isn’t clearly identified and allocated. Garbage in, garbage out.

Organizations that have invested in consistent cost tagging and resource-level visibility across their environments are finding that it pays dividends well beyond traditional FinOps use cases. That same discipline becomes the foundation for understanding AI costs at the granularity needed to connect them to business outcomes. Better tagging means better attribution. Better attribution means better assumptions. And better assumptions mean the value conversation with leadership actually holds up under scrutiny.
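One rough way to gauge how solid that foundation is: measure what share of spend sits on resources carrying every tag you rely on for attribution. The sketch below assumes a hypothetical set of required tags and a simple in-memory resource list; real inventories would come from your cloud provider or data center tooling.

```python
# Hypothetical tag keys an organization might require for attribution.
REQUIRED_TAGS = ("project", "team", "cost_center")

# Hypothetical resource inventory with monthly cost and tags.
resources = [
    {
        "id": "gpu-node-1",
        "monthly_cost": 8000.0,
        "tags": {"project": "support-chatbot", "team": "customer-success", "cost_center": "cc-101"},
    },
    {
        "id": "gpu-node-2",
        "monthly_cost": 2000.0,
        "tags": {"project": "doc-search"},  # missing team and cost_center
    },
]


def attributed_share(items, required=REQUIRED_TAGS):
    """Fraction of total spend on resources that carry every required tag."""
    total = sum(item["monthly_cost"] for item in items)
    if total == 0:
        return 0.0
    tagged = sum(
        item["monthly_cost"]
        for item in items
        if all(item["tags"].get(tag) for tag in required)
    )
    return tagged / total


share = attributed_share(resources)
print(f"{share:.0%} of spend is fully attributable")
```

Weighting by cost rather than by resource count keeps the focus on the untagged resources that distort the numbers most.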

The SaaS Wake-Up Call

One pattern that’s starting to get attention is what’s happening inside SaaS products. Vendors across the industry are embedding AI into their platforms to improve the customer experience, which sounds like a straightforward win. But some of these companies are discovering that their AI infrastructure costs are approaching, or in some cases matching, what their customers are actually paying for the product.

The economics may still work out in certain cases. AI-powered features can drive retention, expansion, and differentiation that more than offset the cost. But organizations need to know where the break point is. At what level of spend does the value stop justifying the investment? Without that visibility, you’re not making a strategic decision. You’re just absorbing cost and hoping the math works out.
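The break point can be framed as simple arithmetic. This sketch assumes a per-customer view with a hypothetical price, non-AI cost to serve, and target gross margin; it deliberately ignores retention and expansion effects, which would need their own estimates.

```python
def max_ai_cost_per_customer(price, other_costs, target_margin):
    """Largest AI cost per customer that still meets the target gross margin.

    Derived from: (price - other_costs - ai_cost) / price >= target_margin.
    """
    return price * (1 - target_margin) - other_costs


def gross_margin(price, other_costs, ai_cost):
    """Gross margin per customer as a fraction of price."""
    return (price - other_costs - ai_cost) / price


# Hypothetical figures: $100/month plan, $20 non-AI cost to serve, 50% margin target.
ceiling = max_ai_cost_per_customer(price=100.0, other_costs=20.0, target_margin=0.5)
print(f"AI cost ceiling per customer: ${ceiling:.2f}")

# AI cost approaching the plan price pushes the margin negative.
print(f"Margin at $90 AI cost: {gross_margin(100.0, 20.0, 90.0):.0%}")
```

Tracking actual AI cost per customer against a ceiling like this turns "hoping the math works out" into a threshold someone can monitor.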

GPUs Are the Next Frontier

Tokens are one dimension of AI spend. GPUs are the other.

Whether organizations are running GPU workloads in the cloud or investing in on-premises infrastructure, the ability to forecast and manage GPU consumption is quickly becoming a core operational need. The same principles that apply to forecasting compute and memory usage need to be extended to GPUs: understanding recent usage patterns, projecting future demand based on planned initiatives, and having the flexibility to adjust those models as circumstances change.
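As an illustration of those three ingredients, the sketch below projects GPU hours from a linear trend in recent usage and layers on demand from planned initiatives. The linear trend is a simplifying assumption, and the usage figures and initiative sizes are hypothetical; a production forecast would likely need seasonality and capacity constraints.

```python
def forecast_gpu_hours(recent_usage, planned_additions, months_ahead):
    """Project monthly GPU hours from a linear trend plus planned initiatives.

    recent_usage: monthly GPU hours, oldest first (at least two months).
    planned_additions: list of (start_month, extra_hours); start_month 1 is next month.
    """
    if len(recent_usage) < 2:
        raise ValueError("need at least two months of usage to estimate a trend")

    # Average month-over-month change across the recent window.
    deltas = [later - earlier for earlier, later in zip(recent_usage, recent_usage[1:])]
    trend = sum(deltas) / len(deltas)

    forecast = []
    for month in range(1, months_ahead + 1):
        baseline = recent_usage[-1] + trend * month
        planned = sum(hours for start, hours in planned_additions if start <= month)
        forecast.append(max(0.0, baseline + planned))
    return forecast


# Hypothetical inputs: four months of usage, one initiative starting in month 2.
usage = [1000, 1100, 1200, 1300]
initiatives = [(2, 500)]
projection = forecast_gpu_hours(usage, initiatives, months_ahead=3)
print(projection)
```

Because the planned initiatives are a separate input, the model can be rerun whenever a launch date moves or a project is cancelled.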

This isn’t a new problem conceptually. It’s a familiar infrastructure challenge applied to a new and fast-moving resource type. But the pace at which GPU demand is growing, driven largely by AI workloads, means that organizations without a forecasting approach already in place are falling behind quickly.

What Good Looks Like

The organizations getting ahead of this aren’t necessarily the ones with the largest AI budgets. They’re the ones applying the same financial discipline to AI that they apply to every other area of infrastructure investment.

That means visibility at the project and business unit level, not just the organizational level. It means consistent cost tagging across cloud and data center resources so that AI workload costs can actually be traced and trusted. It means connecting spend to outcomes in a way that can be communicated to leadership. It means forecasting GPU and token consumption with enough lead time to make informed decisions. And it means knowing, with some confidence, where the value is and where it isn’t.

AI is not going to get simpler or cheaper. The organizations that build the accountability structures now will be in a much stronger position when the next wave of investment decisions arrives.

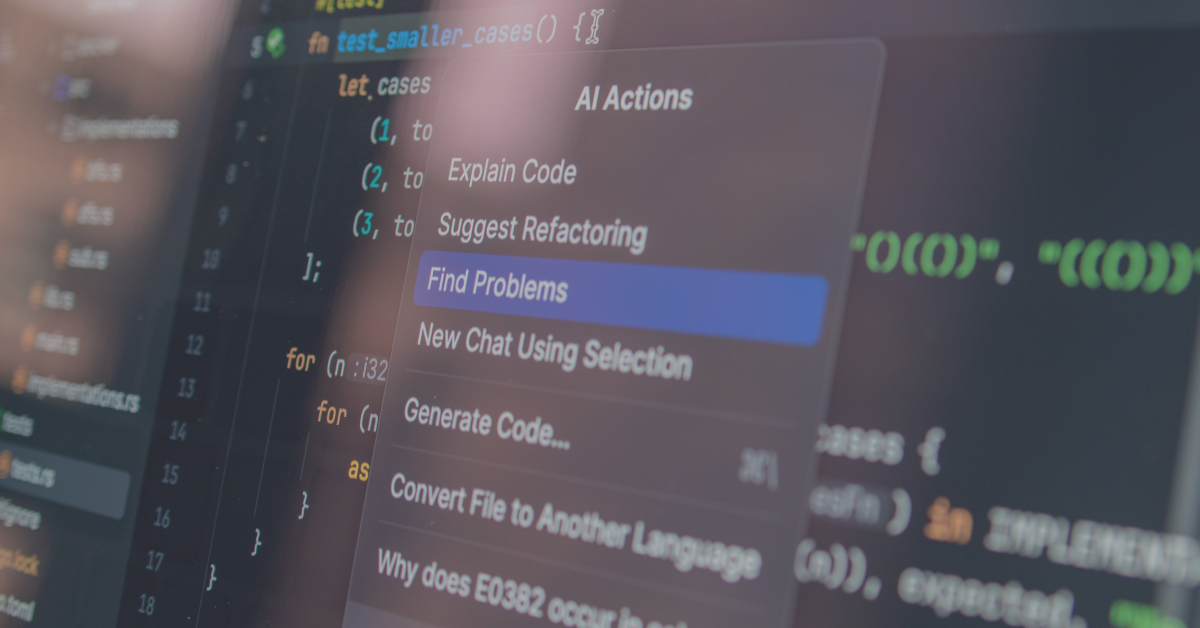

Getting accurate visibility at the project and business level is paramount to the success of your value mapping process. Take a look at a tooling option that can provide that visibility.

Getting there is a process, not a flip of a switch. But organizations that treat infrastructure visibility as a prerequisite to AI accountability, rather than an afterthought, tend to find that the investment pays off well beyond the AI use case. It becomes the connective tissue between technology spend and business outcomes across the board.